Testcase

I implemented an AI "test" environment. One might think this is a bit too much, and you are probably right. On the other hand, my real-life job is closely related to software testing, so it probably comes only naturally. The basic underlying idea:

games can be saved (stateless - as described somewhere else...)

these games can be loaded as a "before" and an "after" scenario

one of the opponents is a "fixed" AI, which plays the role of the opponent of the AI to be tested

this fixed AI must have a "fixed" behaviour in order for the test to be reproducible

the other AI will be the one to be tested

if the "after" scenario defined by the testcase is exactly the same as the one produced by the AI to be tested, then the test is passed; otherwise it has failed
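The idea above can be sketched roughly as follows. This is only a minimal illustration in Python, not the actual implementation: `GameState`, `run_testcase` and the two toy AIs are hypothetical names, and a game state is reduced to a plain dictionary.

```python
import copy
from typing import Callable, Dict

# Assumption: a game state is simplified to a plain dictionary.
GameState = Dict[str, object]
# Assumption: an AI is any function that maps a state to the next state.
AI = Callable[[GameState], GameState]


def run_testcase(before: GameState, expected_after: GameState,
                 fixed_ai: AI, tested_ai: AI, rounds: int = 1) -> bool:
    """Play `rounds` rounds from `before` and compare with `expected_after`."""
    state = copy.deepcopy(before)   # never mutate the saved "before" state
    for _ in range(rounds):
        state = fixed_ai(state)     # player 1: deterministic opponent
        state = tested_ai(state)    # player 2: the AI to be tested
    return state == expected_after  # only an exact match counts as "passed"


# Toy AIs (hypothetical): the fixed AI attacks, the tested AI blocks.
def fixed_attacker(state: GameState) -> GameState:
    new = copy.deepcopy(state)
    new["attackers"] = ["Creature"]
    return new


def blocking_ai(state: GameState) -> GameState:
    new = copy.deepcopy(state)
    new["blocked"] = list(new["attackers"])
    new["attackers"] = []
    return new


before = {"attackers": [], "blocked": [], "life": [20, 20]}
after = {"attackers": [], "blocked": ["Creature"], "life": [20, 20]}
print(run_testcase(before, after, fixed_attacker, blocking_ai))  # True
```

The comparison is deliberately strict: any deviation from the stored "after" state fails the test, which is why the fixed AI has to behave deterministically.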

Testcases as such can be defined, commented and collected. You can edit gamestates. You can import gamestates. You can view gamestates (in a window which behaves like a match). Testcases can be collected into a "TestRun". TestRuns can be run on the AI to be tested in sequential order. The output can be viewed. Thus, by collecting useful testcases, I can automatically verify:

that an AI still behaves as expected after changes

that cards are implemented, and that the AI handles them as expected
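A TestRun of this kind could look roughly like the following Python sketch. The class names, the `check` hook and the AI names `ai_a` / `ai_b` are assumptions made for illustration only; they do not mirror the real program.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class TestCase:
    name: str
    comment: str
    # Hypothetical hook: returns True if the given AI reproduces the "after" state.
    check: Callable[[str], bool]


@dataclass
class TestRun:
    name: str
    testcases: List[TestCase] = field(default_factory=list)

    def run(self, tested_ai: str) -> List[Tuple[str, bool]]:
        """Run every testcase in sequential order and collect (name, passed)."""
        return [(tc.name, tc.check(tested_ai)) for tc in self.testcases]


# Placeholder checks; a real check would load gamestates and play a round.
regression = TestRun("Regression", [
    TestCase("BlockTest1", "AI should block", lambda ai: ai == "ai_a"),
    TestCase("AlwaysPasses", "placeholder", lambda ai: True),
])

for name, passed in regression.run("ai_b"):
    print(f"{name}: {'passed' if passed else 'failed'}")
```

Re-running the same TestRun after every change to an AI gives a simple regression check.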

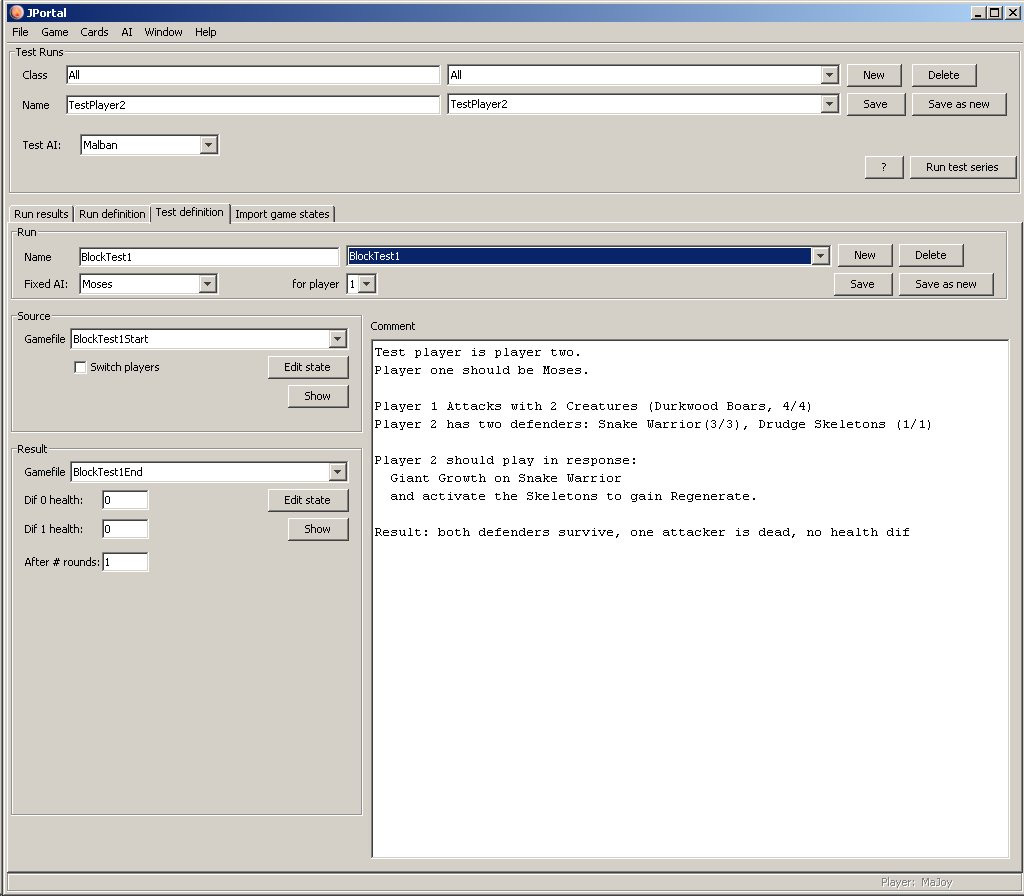

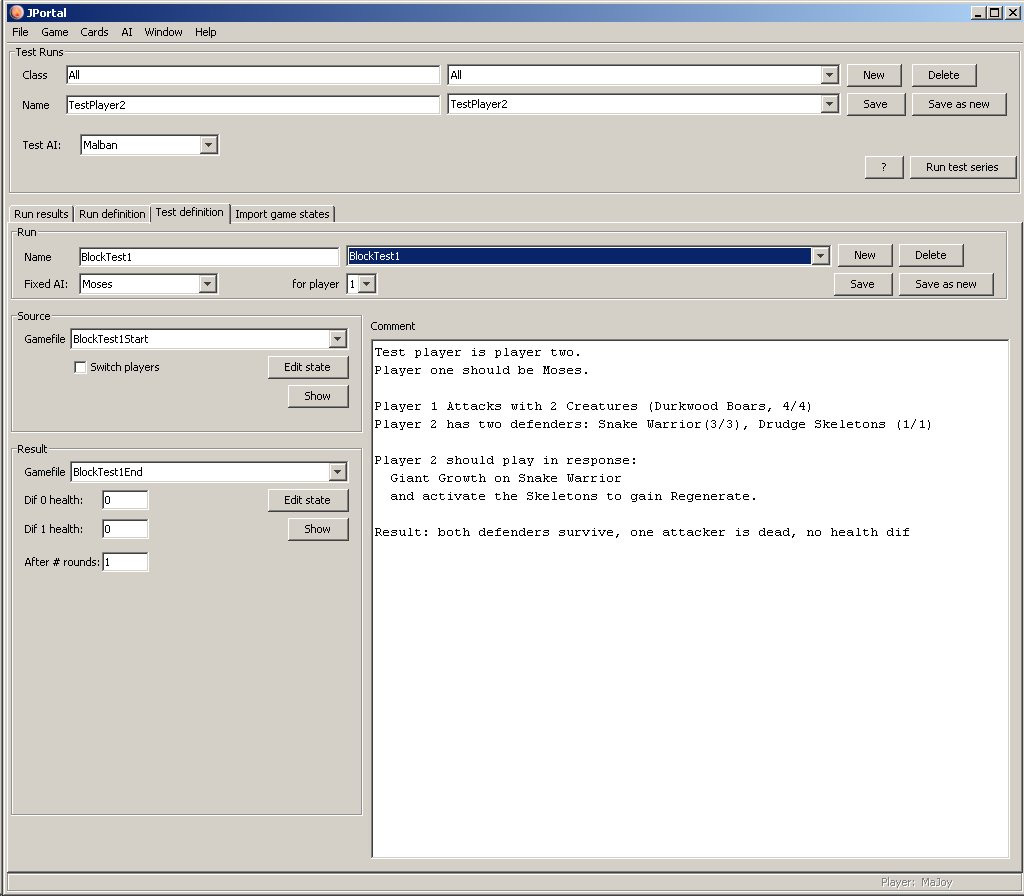

Example: AI to be tested (EAI): Malban (what else :-)

TestCase name is BlockTest1

Fixed AI is "Moses"; the fixed AI is player 1 (this automatically means the AI to be tested will be player 2)

original gamestate is named BlockTest1Start

target gamestate is named BlockTest1End

no player has any life difference

testcase has duration of one game round
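Written down as data, the example definition might look like the sketch below. The field names are hypothetical, and modelling "no life difference" as a pair of zeros is an assumption about what that setting means.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class TestCaseDefinition:
    name: str
    tested_ai: str
    fixed_ai: str
    fixed_ai_player: int                       # 1 or 2
    start_state: str                           # name of the "before" gamestate
    end_state: str                             # name of the "after" gamestate
    life_difference: Tuple[int, int] = (0, 0)  # assumed: per-player life delta
    rounds: int = 1                            # duration in game rounds

    @property
    def tested_ai_player(self) -> int:
        # The AI to be tested takes the seat the fixed AI does not occupy.
        return 2 if self.fixed_ai_player == 1 else 1


block_test_1 = TestCaseDefinition(
    name="BlockTest1",
    tested_ai="Malban",
    fixed_ai="Moses",
    fixed_ai_player=1,
    start_state="BlockTest1Start",
    end_state="BlockTest1End",
)
print(block_test_1.tested_ai_player)  # 2
```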

Testcase

(Note: If the Enhanced AI were player 1, it would play "Undo", and the result would be totally different!)

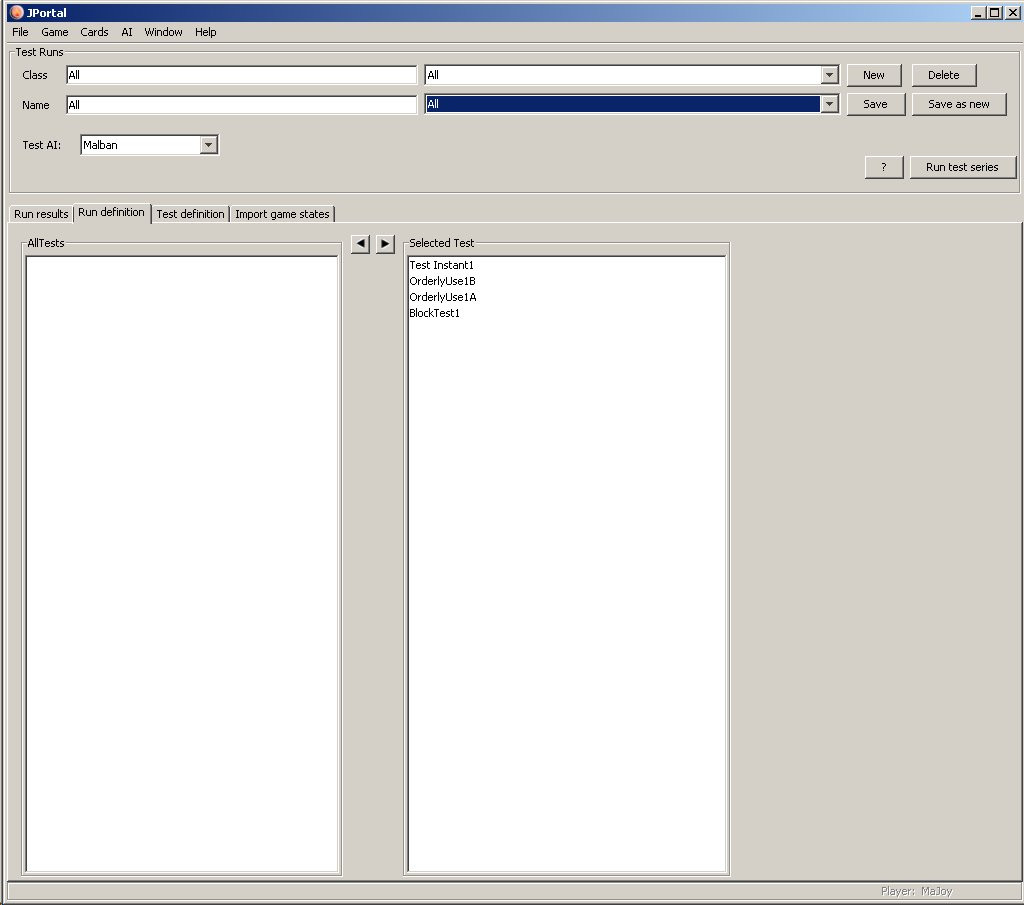

TestRun definition looks like this:

Testrun

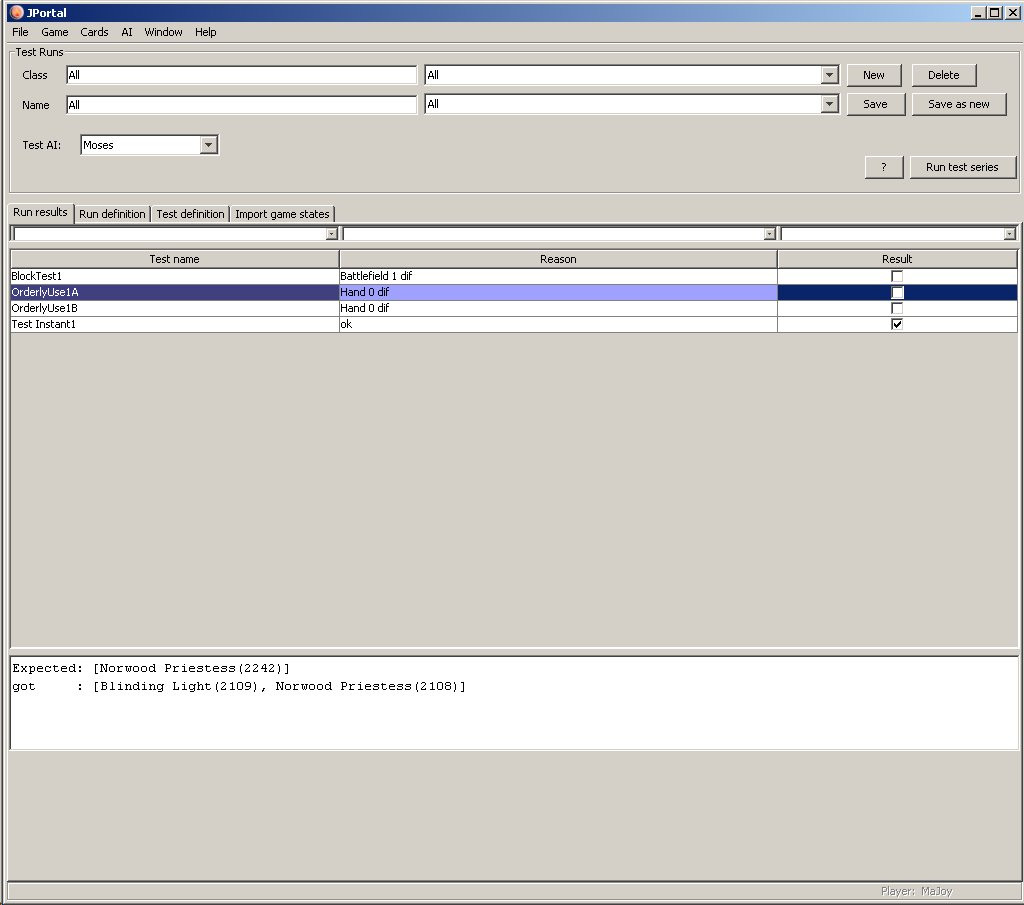

And finally, the results of a run (tested: Moses, who does not pass the tests, only one):

Test results